Charge Ahead: Intelligent Battery Management for Optimal Design

March 7, 2023

Lithium-ion (Li-Ion) batteries are common in rechargeable tools, handheld devices and E-mobility. Li-Ion has many benefits over other battery chemistries in these and many other applications, but be aware: there are pitfalls to avoid in charging and discharging regimes if the battery is to have a safe, long and reliable life. In this blog post, we discuss the challenges and identify the ideal solutions. We also introduce a new integrated, intelligent, single-chip battery management solution that provides unique features and a high level of control for maximum run time, flexibility and safety.

Battery technology is more than 200 years old and counting

Batteries have been around since 1800, when Italian Alessandro Volta invented his ‘voltaic pile’ with copper and zinc discs separated by brine-soaked cardboard. But these were ‘primary cells’ that degraded quickly and couldn’t be recharged. It took another 59 years before rechargeable or ‘secondary cells’ were invented with their lead and acid construction. The scene was then set for the development of battery technologies, resulting in today’s long talk-time cell phones, powerful cordless hand tools and electric vehicles (EVs) with decent range, from e-bikes to electric supercars. Legislation is also a driver. For example, California may outlaw sales of new gas-powered garden machinery as early as 2024, with cordless electric versions being the only sensible alternative.

Lithium-ion is the chemistry of choice for modern devices

For best overall performance, lithium-ion is now the most popular battery type used in mobile, rechargeable applications. Why? This battery technology has a long service life and a high energy storage-to-weight ratio, and it is more cost effective than lead-acid batteries. Li-Ion cells also have other useful characteristics, such as no ‘memory’ effect, low self-discharge and a cell voltage, at 3.8 V, high enough for a single cell to power many designs.

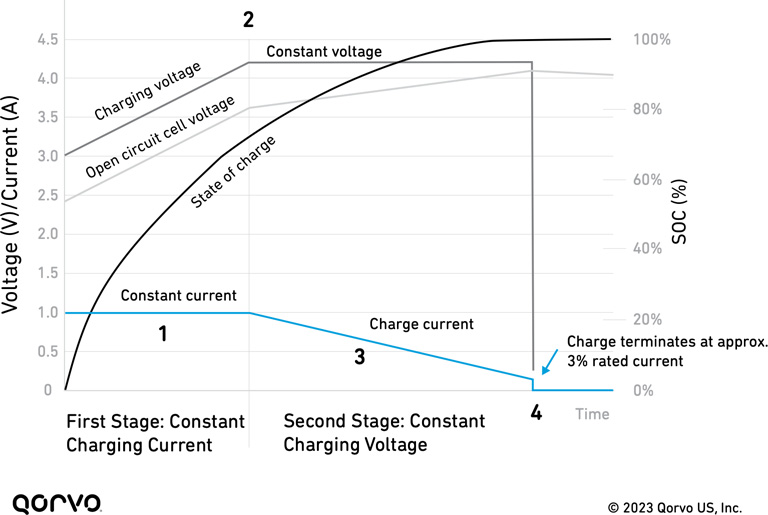

It’s not all good news though. Li-Ion cells must be securely protected against excessive voltage, excessive charge or discharge current, deep discharge and cell over-temperature. When these criteria are not met, there is the possibility of explosion or fire. The ideal, safe charging regime for a cell rated in amp-hours (Ah) is shown in Figure 1.

The typical charge process of a lithium-ion battery goes through several stages, referenced in the figure:

- A constant current at ‘1C’ (a current in amps numerically equal to the cell’s Ah rating) is applied, which brings the battery state-of-charge to around 70%.

- The cell voltage reaches 4.2 V and the charger switches to constant voltage mode.

- The current then drops as the battery saturates.

- A full charge of a Li-Ion battery is reached when the current decreases to 3%-5% of the Ah rating of the cell for traditional cobalt-blended types. Once this threshold is met, the charge process is terminated to avoid cell degradation.

Figure 1. Lithium-ion battery charging regime

Charge may restart with a ‘topping-up’ phase if the battery self-discharges below another threshold voltage, and cell temperature is monitored continuously to detect any thermal stress.
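The stages above can be sketched as a small state machine. This is only an illustrative sketch, not firmware from any particular charger: the function and parameter names are hypothetical, the 4.05 V top-up threshold is an assumed value (the text only says "another threshold voltage"), and temperature monitoring is left out for brevity.

```python
from enum import Enum


class Phase(Enum):
    CONSTANT_CURRENT = "cc"
    CONSTANT_VOLTAGE = "cv"
    DONE = "done"


def next_phase(phase, cell_v, current_a, capacity_ah,
               v_max=4.2, term_fraction=0.05, v_recharge=4.05):
    """Return the next charging phase for a single cell.

    v_recharge is an assumed top-up threshold, not a value from the article.
    """
    if phase is Phase.CONSTANT_CURRENT:
        # Switch to constant voltage once the cell reaches 4.2 V.
        return Phase.CONSTANT_VOLTAGE if cell_v >= v_max else phase
    if phase is Phase.CONSTANT_VOLTAGE:
        # Terminate when the current tapers to 3%-5% of the Ah rating.
        done = current_a <= term_fraction * capacity_ah
        return Phase.DONE if done else phase
    # DONE: restart a top-up charge if the cell has self-discharged.
    return Phase.CONSTANT_CURRENT if cell_v < v_recharge else phase


def cc_setpoint_a(capacity_ah, c_rate=1.0):
    """Constant-current setpoint in amps: 1C equals the Ah rating."""
    return c_rate * capacity_ah
```

For a hypothetical 2 Ah cell, the constant-current setpoint at 1C is 2 A and charging would terminate once the taper current falls to 0.1 A (5%).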

Safety is vital, but knowing capacity and health are also important

Safety is the prime concern when managing battery charge and discharge. Because Li-Ion is often used in highly uncontrolled environments such as gardens and home workshops, protection methods must be robust. Additionally, a tool or machine left unused during the cold winter months should retain its charge as much as possible, ready to instantly and safely operate at maximum power when the grass grows or the home project urge kicks in.

For applications requiring higher power, such as riding lawnmowers, product designers are pushing the battery voltage higher to extend capacity and run time while keeping currents manageable. Strings of up to 20 cells generating 90 V or more are now common. This adds to the associated dangers of shock, voltage breakdown and high energy release on failure. Series connection also means that the entire pack run-time and life depend on the weakest cell. To counter this, any charging system should ensure that the regime balances the stress on each cell—with some way to force charges to be equal.
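One simple way to picture balancing is to pick out the cells that sit noticeably above the weakest cell and divert a small current around them until the string evens out. The sketch below assumes that approach; the function name and the 10 mV tolerance are hypothetical choices for illustration, not values from any specific BMS.

```python
def cells_to_bleed(cell_voltages, tolerance_v=0.010):
    """Return indices of cells more than tolerance_v above the lowest cell.

    tolerance_v (10 mV here) is an assumed value for illustration.
    """
    lowest = min(cell_voltages)
    return [i for i, v in enumerate(cell_voltages) if v - lowest > tolerance_v]


# Example string of four cells; cells 1 and 3 are ahead of the weakest cell.
print(cells_to_bleed([4.10, 4.15, 4.105, 4.12]))  # → [1, 3]
```

A real system would repeat this check periodically and stop balancing a cell as soon as it falls back within tolerance.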

Another major concern is knowing the state of charge (SOC) or ‘gas-gauging’ of the battery with good accuracy. It’s a minor annoyance when a cordless drill unexpectedly dies while it still shows it has a charge. But when an electric golf cart or ride-on mower unexpectedly stops in the middle of the fairway, it’s a much bigger deal. SOC indication is challenging because cell voltage is not a good measure: it depends on temperature as well as on remaining charge.

One of Li-Ion’s desirable characteristics is that its voltage on discharge stays relatively flat up to exhaustion, which doesn’t help as an indicator. A solution is to actually measure the charge introduced and taken, so-called ‘coulomb counting.’ But cell phone users will know that this technique can lose calibration, and the recommendation is that a battery is fully discharged occasionally to ‘reset’ calculations.
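Coulomb counting amounts to integrating current over time. The following is a minimal sketch under simple assumptions: current is sampled at a known interval, positive current means charging, and the class and method names are hypothetical. The empty-point reset mirrors the occasional full discharge mentioned above.

```python
class CoulombCounter:
    """Track state of charge by integrating current over time."""

    def __init__(self, capacity_ah, initial_soc=1.0):
        self.capacity_as = capacity_ah * 3600.0  # capacity in amp-seconds
        self.charge_as = initial_soc * self.capacity_as

    def update(self, current_a, dt_s):
        """Add one sample; positive current charges, negative discharges."""
        self.charge_as += current_a * dt_s
        # Clamp so accumulated measurement error cannot leave the 0-100% range.
        self.charge_as = min(max(self.charge_as, 0.0), self.capacity_as)

    def recalibrate_empty(self):
        """Reset the count when the battery is known to be fully discharged."""
        self.charge_as = 0.0

    @property
    def soc(self):
        return self.charge_as / self.capacity_as


counter = CoulombCounter(capacity_ah=2.0)
counter.update(current_a=-1.0, dt_s=3600)  # 1 A discharge for one hour
print(round(counter.soc, 3))  # → 0.5
```

Small offset errors in the current measurement accumulate in `charge_as`, which is why a known reference point such as full discharge is needed to reset the count.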

State of health (SOH) is also a useful measure. The capacity of Li-Ion cells reduces over time and with the number of charge/discharge cycles. This causes a 100% indicated charge to progressively signify less capacity and run time. Again, cell voltage is not a good measure of SOH, but internal resistance can be an indicator, inferred from voltage changes with load steps.
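Inferring internal resistance from a load step is a direct application of Ohm's law: the change in terminal voltage divided by the change in current. A minimal sketch, with a hypothetical function name and example readings chosen only for illustration:

```python
def internal_resistance_ohm(v_before, i_before, v_after, i_after):
    """Estimate internal resistance from voltage and current around a load step."""
    delta_i = i_after - i_before
    if delta_i == 0:
        raise ValueError("a load step needs a change in current")
    return abs((v_after - v_before) / delta_i)


# A cell sagging from 4.00 V to 3.90 V when the load steps from 0 A to 2 A.
r = internal_resistance_ohm(4.00, 0.0, 3.90, 2.0)
print(f"{r * 1000:.0f} mOhm")  # → 50 mOhm
```

Tracking this value over the life of the pack, at comparable temperature and state of charge, gives a trend that can feed an SOH estimate.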

Integrating battery management adds functionality and value

Given the complexity of Li-Ion battery charge control and monitoring requirements, an attractive solution is an integrated and intelligent battery management system (BMS). This can be implemented as a compact and cost-effective IC with mixed analog and digital technology, including a processor, memory and interfaces. With this computing power, the IC can add advanced functionality and features. Examples include battery state-of-health determination, cell balancing control, precision coulomb counting, historical data logging and more. Wired and wireless communications could also be incorporated, something increasingly expected in our connected world.

An integrated BMS can be offered with different processor cores to suit different applications, with the Arm Cortex-M0 clocked at 50 MHz or the faster Cortex-M4F at 150 MHz being popular choices. The M4F version has 128 kB flash memory and 32 kB SRAM, four times that of the M0, and more general-purpose I/O pins, while the floating-point processing helps with advanced algorithms. Both versions offer UART (Universal Asynchronous Receiver/Transmitter), SPI (Serial Peripheral Interface) and I2C/SMBus interfaces, while the M4F also has CAN (Controller Area Network).

Designers will likely be familiar with Arm® devices and their programming tools, and can use them to add custom, general-purpose functionality on top of any pre-existing embedded firmware. For instance, fault events could be logged in SRAM for diagnostics, or data tables could be generated to analyze charge/discharge performance and enable predictive maintenance for SOH.
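As an illustration of fault logging in a fixed amount of memory, the sketch below models a bounded log that overwrites its oldest entry when full. It is written in Python for readability rather than as embedded firmware, and the class names, fault codes and log size are all hypothetical.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class FaultEvent:
    timestamp_s: float
    code: str       # hypothetical fault code, e.g. "OVER_TEMP"
    value: float    # measurement that triggered the fault


class FaultLog:
    """Fixed-size log; the oldest event is dropped when the log is full."""

    def __init__(self, capacity=32):
        self._events = deque(maxlen=capacity)

    def record(self, timestamp_s, code, value):
        self._events.append(FaultEvent(timestamp_s, code, value))

    def latest(self, n=5):
        """Return up to n of the most recent events, oldest first."""
        return list(self._events)[-n:]


log = FaultLog(capacity=2)
log.record(10.0, "OVER_VOLTAGE", 4.31)
log.record(25.5, "OVER_TEMP", 61.0)
log.record(40.2, "OVER_CURRENT", 48.0)
print([e.code for e in log.latest()])  # → ['OVER_TEMP', 'OVER_CURRENT']
```

Bounding the log keeps memory use predictable, which matters when only a few tens of kilobytes of SRAM are available.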

An integrated BMS requires analog interfaces for battery current, temperature and individual cell voltage monitoring, with appropriate ADC (analog-to-digital converter) resolution and accuracy. For wide applicability, it could monitor up to 20 cells. To ensure safety and efficiency, the device should have current and fault sensing that triggers a fast-acting hardware shut-down, avoiding delays and limiting stress on the battery or BMS. The current sense interface could have programmable gain for accurate measurement across a wide range of battery currents, using a low-value sensing resistor in the milliohm range for minimal dissipation and compact size.
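The interplay between sense resistor, programmable gain and ADC range can be shown with a little arithmetic. The sketch below is a simplified, unidirectional model with assumed values throughout (1 mΩ resistor, 2.5 V reference, 12-bit ADC, power-of-two gain steps); none of these figures or function names come from a specific device.

```python
def pick_gain(max_current_a, gains=(1, 2, 4, 8, 16, 32),
              r_sense_ohm=0.001, v_ref=2.5):
    """Pick the highest gain that keeps the amplified signal within v_ref."""
    usable = [g for g in gains if max_current_a * r_sense_ohm * g <= v_ref]
    if not usable:
        raise ValueError("current too high for this sense resistor")
    return max(usable)


def sense_current_a(adc_counts, gain, r_sense_ohm=0.001,
                    v_ref=2.5, adc_bits=12):
    """Convert a raw ADC reading back to battery current in amps."""
    v_adc = adc_counts / (2 ** adc_bits - 1) * v_ref
    return v_adc / (gain * r_sense_ohm)


print(pick_gain(100.0))  # → 16
print(pick_gain(10.0))   # → 32
```

Under these assumptions, a 100 A peak develops only 0.1 V across the resistor, so a gain of 16 uses most of the ADC range, while a 10 A application can use the maximum gain for finer resolution.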

The BMS typically uses external power MOSFETs in a back-to-back configuration to control the charging current and to interrupt the discharge current when necessary under overload or short-circuit conditions. Placing the MOSFETs on the positive rail, or ‘high-side,’ is preferable for system communications and for other system considerations. This means that if commonly available N-channel types are used, the gate on-drive voltage must be higher than the supply from the charger or battery. However, this voltage can be generated in an integrated BMS with a charge pump circuit. Similarly, the BMS IC could include buck and linear regulators to produce internal and external auxiliary power rails.

An example might be a 3.3 V rail for a low-energy Bluetooth® module. The management system must include all these features but still have low power consumption. Even when the end-equipment is off and stored, the BMS needs to be in hibernate mode with a basic level of cell voltage monitoring. In this state, the current draw should be no more than a few microamps to avoid excessive battery discharge.
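A quick calculation shows why the hibernate current matters over a storage season. The sketch below assumes a constant standby current; the 5 µA figure, the 180-day period and the function name are illustrative assumptions only.

```python
def hibernate_drain_mah(current_ua, days):
    """Charge drawn (mAh) by a constant standby current over a storage period."""
    hours = days * 24
    return current_ua * hours / 1000.0  # µAh to mAh


print(hibernate_drain_mah(5, 180))    # → 21.6
print(hibernate_drain_mah(500, 180))  # → 2160.0
```

At 5 µA, six months of storage costs about 21.6 mAh, roughly 1% of a hypothetical 2 Ah cell. At 500 µA, the same period would draw 2160 mAh, more than that cell holds.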

The easy route to a BMS solution

All of the desirable features of a Li-Ion battery management system described here are available in single-chip, integrated solutions from Qorvo in their Power Application Controller range. Two devices are initially available: the PAC22140 and PAC25140, using the Arm Cortex-M0 and Cortex-M4F cores, respectively. These devices support up to 20 cells with the associated high-voltage drive and monitor ratings. Firmware is included for comprehensive charge/discharge control and monitoring, along with algorithms for coulomb counting and a 50-mA cell balancing capability. Full Qorvo support is available with software and hardware development kits, a Windows GUI for configuration and monitoring, and full documentation.

Check out Qorvo Power Solutions and explore an entire ecosystem of integrated controllers and discrete parts for the design of portable and mobile equipment, not just for battery management but also for motor control and general power conversion and management.

The Bluetooth® word mark and logos are registered trademarks owned by Bluetooth SIG, Inc. and any use of such marks by Qorvo US, Inc. is under license. Other trademarks and trade names are those of their respective owners.
